All these restrictions can be easily adapted to fix other requirements, ans then the main learning process has not to be changed, only the setup. That is, neurons from input layer will take only boolean values (\(0\)/\(1\)) and the return will be a boolean string. As a first approach, we will use them for computing boolean functions,

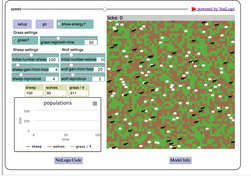

The model we will prepare here is a slightly variant of that coming with the NetLogo official distribution. Although it lacks some good features (for example, we can't control the learning process when we need several hidden layers to model more complex problems because the bad composition of Gradient Descent Method for tuning weights), it can solve a lot of interesting problems and offers a very visual way to understand the fundamentals of this area of Machine Learning.Īs you can find a lot of good resources out there about the basics of ANN (better than I can write here) we will focus this post only in the implementation in NetLogo of a more or less flexible multilayer perceptron network, hoping that the use of agents and links in the model can help the reader to understand the central ideas of how it works. indeed, they are the basement of the new DNN methods and it is still appearing in some phases of them. The Multilayer Perceptron is one of the main ANN structures in use today. my excuses for those of you awaiting for something about Deep Neural Networks (DNN, as those used in AlphaGo from Google, or CaptionBot from Microsoft), maybe in a later post I will try to extract the main features of some convolutional neural network and test it on a very simple NetLogo model, but I am afraid that we will need too many computational resources to obtain anything of interest with this tool.

We will restrict ourselves to the more common and classical ones, the Multilayer Perceptron Network. As a way to continue with AI algorithms implemented in NetLogo, in this post we will see how we can make a simple model to investigate about Artificial Neural Networks (ANN).
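
To make the boolean setup more concrete, here is a minimal sketch in Python rather than NetLogo, so it is not the model from this post. It assumes sigmoid activations, a squared-error loss, and plain gradient descent with backpropagation. All names (`make_network`, `forward`, `predict`, `train_step`, `XOR_BY_HAND`) are made up for the illustration. Inputs are tuples of \(0\)/\(1\) and the prediction is returned as a boolean string.

```python
import math
import random


def sigmoid(x):
    """Logistic activation, squashing any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def make_network(sizes, rng):
    """Build one weight table per layer; each row is [bias, w_1, ..., w_n]."""
    return [
        [[rng.uniform(-1.0, 1.0) for _ in range(n_in + 1)] for _ in range(n_out)]
        for n_in, n_out in zip(sizes, sizes[1:])
    ]


def forward(network, bits):
    """Return the activations of every layer, starting with the input bits."""
    activations = [[float(b) for b in bits]]
    for layer in network:
        prev = activations[-1]
        activations.append(
            [sigmoid(row[0] + sum(w * a for w, a in zip(row[1:], prev)))
             for row in layer]
        )
    return activations


def predict(network, bits):
    """Threshold the output layer at 0.5 and return it as a boolean string."""
    return "".join("1" if a > 0.5 else "0" for a in forward(network, bits)[-1])


def train_step(network, bits, target, rate=0.5):
    """One backpropagation update (gradient descent on squared error)."""
    acts = forward(network, bits)
    # Output-layer deltas: error times the sigmoid derivative a * (1 - a).
    deltas = [(a - t) * a * (1 - a) for a, t in zip(acts[-1], target)]
    for k in range(len(network) - 1, -1, -1):
        layer, prev = network[k], acts[k]
        # Deltas for the layer below, computed before this layer's weights change.
        below = [
            sum(d * row[j + 1] for d, row in zip(deltas, layer)) * p * (1 - p)
            for j, p in enumerate(prev)
        ]
        for d, row in zip(deltas, layer):
            row[0] -= rate * d
            for j, p in enumerate(prev):
                row[j + 1] -= rate * d * p
        deltas = below


# Hand-picked weights (not learned) showing that a 2-2-1 network can represent
# XOR: hidden unit 1 acts like OR, hidden unit 2 like NAND, the output like AND.
XOR_BY_HAND = [
    [[-10.0, 20.0, 20.0], [30.0, -20.0, -20.0]],
    [[-30.0, 20.0, 20.0]],
]

if __name__ == "__main__":
    rng = random.Random(0)
    net = make_network([2, 2, 1], rng)
    samples = [((0, 0), (0,)), ((0, 1), (1,)), ((1, 0), (1,)), ((1, 1), (0,))]
    for _ in range(5000):
        for bits, target in samples:
            train_step(net, bits, target)
    for bits, _ in samples:
        print(bits, predict(net, bits), predict(XOR_BY_HAND, bits))
```

Only the list passed to `make_network` and the training samples depend on the boolean function being learned; `train_step` stays the same, which mirrors the point that changing the requirements affects the setup and not the learning process. Whether the trained network actually reaches XOR depends on the random initial weights, so the hand-set network is printed alongside it for comparison.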
